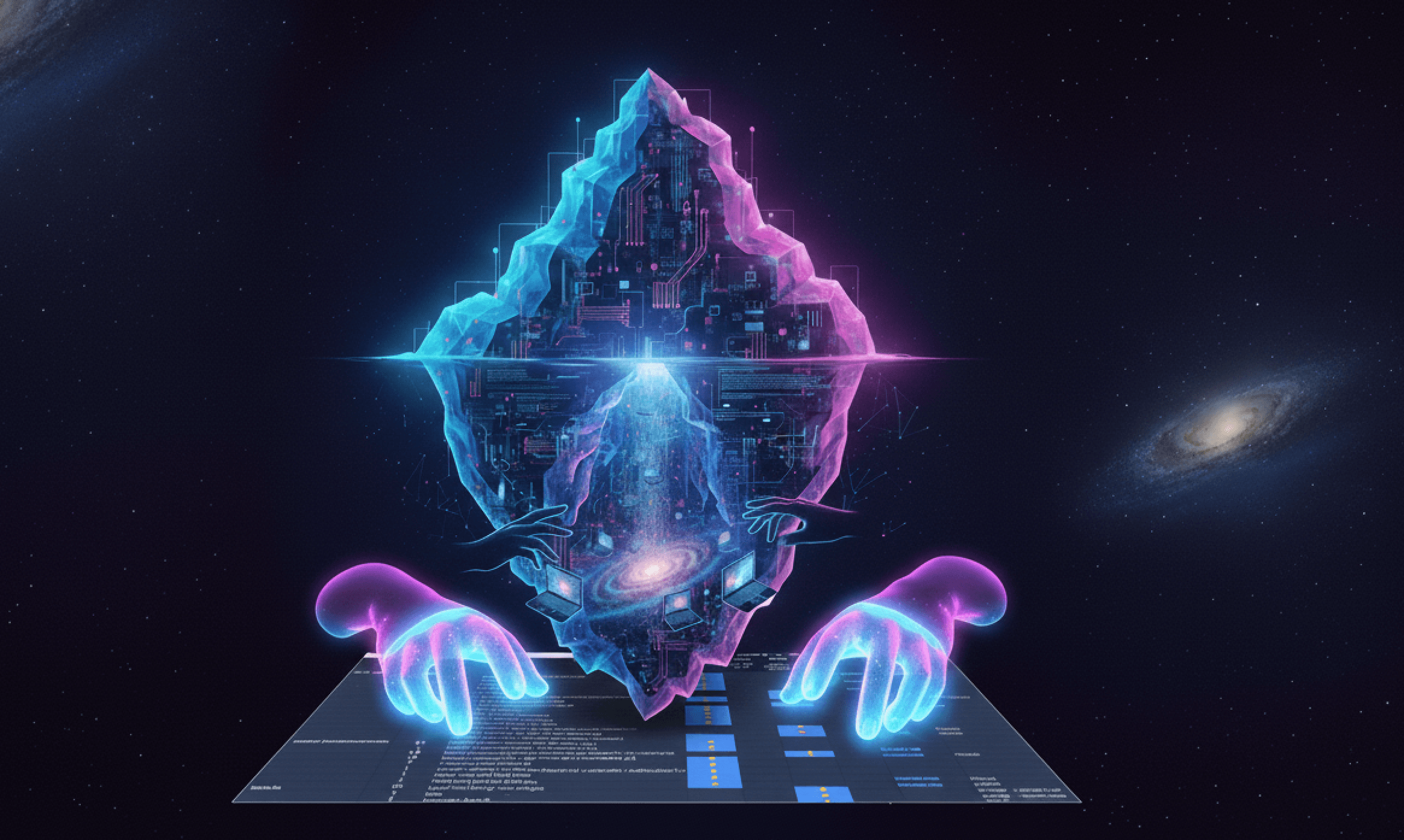

Mixture of Agents Enhances LLM Capabilities

How the Mixture-of-Agents framework boosts AI performance by making models collaborate instead of competing.

In this episode of AI Paper Bites, we break down the Mixture-of-Agents (MoA) framework, a novel approach that improves LLM performance by having several models build on each other's responses rather than relying on a single model.

Diversity for Better AI Decisions

Think of it as DEI for AI: diverse perspectives make better decisions! This framework challenges the conventional wisdom that bigger individual models are always better.

Key Innovations

- Instead of one massive model, MoA layers multiple LLMs to refine responses

- Different models specialize as proposers (idea generators) and aggregators (synthesizers)

- More model diversity = stronger, more balanced outputs
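To make the proposer and aggregator roles concrete, here is a minimal Python sketch of the layered flow described in the points above. It is an illustration under stated assumptions, not the paper's implementation: the names `Model`, `build_aggregation_prompt`, `mixture_of_agents`, and `make_stub` are hypothetical, the prompt wording is invented, and the "models" are stand-in functions rather than real LLM calls.

```python
from typing import Callable, List

# Assumption: a "model" is any function mapping prompt text to response text.
# In practice this would wrap a call to a real LLM; here it stays abstract.
Model = Callable[[str], str]


def build_aggregation_prompt(question: str, proposals: List[str]) -> str:
    """Combine the original question with numbered responses from earlier models."""
    numbered = "\n".join(f"{i}. {p}" for i, p in enumerate(proposals, start=1))
    return (
        f"Question: {question}\n"
        f"Responses from other models:\n{numbered}\n"
        "Synthesize these into a single, improved answer."
    )


def mixture_of_agents(
    question: str,
    proposer_layers: List[List[Model]],
    aggregator: Model,
) -> str:
    """Run each layer of proposers, then let the aggregator write the final answer."""
    proposals: List[str] = []
    for layer in proposer_layers:
        # The first layer sees only the question; later layers also see
        # the previous layer's responses so they can refine them.
        if proposals:
            prompt = build_aggregation_prompt(question, proposals)
        else:
            prompt = question
        proposals = [model(prompt) for model in layer]
    # The aggregator synthesizes the last layer's proposals into one response.
    return aggregator(build_aggregation_prompt(question, proposals))


def make_stub(name: str) -> Model:
    """Hypothetical stand-in for an LLM: reports its name and prompt size."""
    def stub(prompt: str) -> str:
        return f"[{name}] answered a prompt of {len(prompt)} characters"
    return stub


if __name__ == "__main__":
    layers = [
        [make_stub("model-a"), make_stub("model-b"), make_stub("model-c")],
        [make_stub("model-a"), make_stub("model-b")],
    ]
    print(mixture_of_agents("Why is the sky blue?", layers, make_stub("aggregator")))
```

Swapping the stubs for different real models in each layer is where the diversity point comes in: the structure stays the same, only the list of proposers changes.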

As they say, if you put a bunch of similar minds in a room, you get an echo chamber. But if you mix it up, you get innovation!

The AI-native lesson

Could the future of AI be less about bigger models and more about better teamwork? The MoA framework suggests that collaborative AI systems might outperform single monolithic models, even when the individual components are less powerful.

Episode Length: 7 minutes

Listen to the full episode on Apple Podcasts.
