Most AI Training Programs Are Failing. Here's What Actually Works.

Most corporate AI training teaches prompting, not judgment. After running enablement programs across two companies, here's the $5/$50/$500 framework, the three failures that kill AI initiatives, and why leaders have to go through training first.

TL;DR

Most corporate AI training teaches people which buttons to click, not when to trust the output. After running enablement programs across two companies, I've found that the bottleneck isn't tool access. It's problem-solving methodology, management, and the willingness to make participation mandatory. Here's what drives real adoption and what quietly kills it.

Every organization is deploying AI. Almost none are teaching people how to use it well.

The gap isn't tool access. Most employees can reach ChatGPT, Copilot, or Claude in seconds. The gap is judgment: knowing when AI output is reliable, when it needs verification, and when it's confidently wrong. We've handed people a powerful instrument without teaching them to read the sheet music.

This matters because AI is a multiplier, not a replacement. It multiplies existing capability. If someone can't decompose a complex problem into smaller parts, select the right approach for each, and verify the output, then better AI tools change nothing. Zero multiplied by anything is still zero.

The result without proper education: employees who trust outputs they shouldn't, generate content no one reads, and can't tell the difference between a useful response and a convincing one. From data leaks to hallucinations to what I call "workslop" (low-quality AI-generated content that creates more work than it saves), the risks compound fast.

Expect AI workplace training to become as standard as phishing awareness programs. It can't be optional: the risks are too high, and the benefits too significant, to leave to chance.

It's a methodology problem, not a tooling problem

Most AI enablement programs fail because they treat the problem as a tooling problem. They teach people ChatGPT, run a few workshops, declare success, and nothing changes.

I've seen this firsthand. At HG Insights, we ran AI enablement sessions in Q4 2025 that produced a bimodal outcome: the people who were already strong operators got significantly stronger. Everyone else went through the motions. Tool exposure did not produce behavior change.

The core mistake is teaching prompting without teaching problem decomposition. Showing someone how to write a prompt is easy. Understanding that your sales team spends three hours a week prepping pipeline reviews, and that a specific AI workflow can cut that to 30 minutes, requires you to deeply understand each team's workflows and map AI capabilities to specific tasks. Without that mapping, AI training becomes another corporate checkbox.

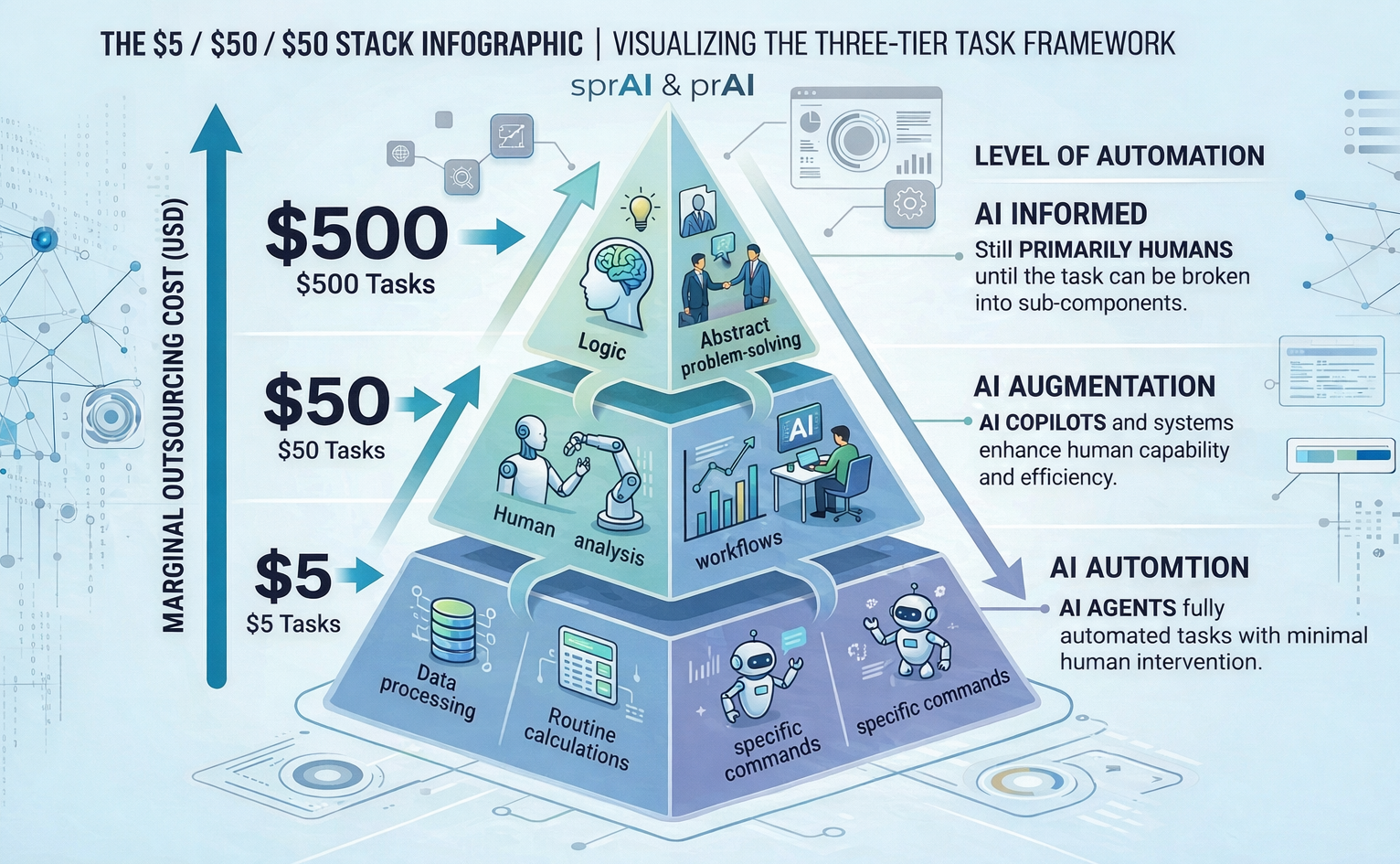

A useful mental model is the $5/$50/$500 framework. Categorize every task by its value:

- $5 tasks (inbox triage, scheduling, follow-ups): automate by default.

- $50 tasks (account research, call prep, email drafting): AI-assisted, human-reviewed.

- $500 tasks (strategy, negotiation, executive relationships): human-led, AI-informed.

Success looks like time migrating up this stack: people spend less time on $5 work and more on the $500 work that actually moves the business. If your training doesn't help people see their job through this lens, it's a tool demo, not enablement.
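One way to make this lens concrete is to log a week of tasks, tag each with a tier, and look at where the hours go. The sketch below is a minimal illustration, not a prescribed tool: the `Tier` and `Task` types, the task names, and the hours are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    # Tiers from the $5/$50/$500 framework (value = nominal dollar value).
    FIVE = 5
    FIFTY = 50
    FIVE_HUNDRED = 500


# Default handling for each tier, as described in the framework.
HANDLING = {
    Tier.FIVE: "automate by default",
    Tier.FIFTY: "AI-assisted, human-reviewed",
    Tier.FIVE_HUNDRED: "human-led, AI-informed",
}


@dataclass
class Task:
    name: str
    tier: Tier
    hours_per_week: float


def share_of_time(tasks: list[Task]) -> dict[Tier, float]:
    """Return the fraction of weekly hours spent in each tier."""
    total = sum(t.hours_per_week for t in tasks)
    shares = {tier: 0.0 for tier in Tier}
    if total == 0:
        return shares
    for t in tasks:
        shares[t.tier] += t.hours_per_week / total
    return shares


# Hypothetical week for one person (illustrative numbers only).
week = [
    Task("inbox triage", Tier.FIVE, 6.0),
    Task("account research", Tier.FIFTY, 10.0),
    Task("negotiation prep", Tier.FIVE_HUNDRED, 4.0),
]

shares = share_of_time(week)
for tier, share in shares.items():
    print(f"${tier.value} tasks: {share:.0%} of time ({HANDLING[tier]})")
```

Running the same tally before and after a program gives a simple check on whether time is actually moving from $5 work toward $500 work.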

The tools that gain the most traction are horizontal ones that solve universal pain points. SuperWhisper, a dictation tool, has been particularly effective because it removes the drudgery of typing long messages or prompts. People talk to their computer and immediately grasp how AI can eliminate mundane work. But this only lands when you understand what people actually do day to day.

What drives real adoption

Three strategies consistently move the needle.

Find skeptics who become converts and use them as evangelists. They understand the resistance because they shared it. Their conversion stories resonate with colleagues in a way that top-down mandates never will. At MadKudu, some of our strongest AI advocates were initially the most resistant. Once they found a workflow that saved them real time, they became more credible champions than anyone on the AI team could have been.

Create structured, mandatory hands-on time. At MadKudu, we ran weekly hackathons where people built with AI tools in practical settings. Not lectures, not slide decks: building real workflows. This required someone at the forefront of AI to evangelize and demonstrate what's possible, but the adoption that followed justified the investment. The key word is "mandatory." Voluntary programs produce enthusiasm in the already-enthusiastic and nothing in everyone else.

Leaders have to go first. This is the one most companies skip. If managers aren't AI-native themselves, they can't evaluate whether their teams are using AI effectively, they can't coach someone on better approaches, and they can't tell the difference between good AI-augmented work and workslop. The teams whose leaders weren't AI-enabled saw the least behavior change, even when individual contributors had strong tool exposure. Leaders set the ceiling. If they operate in the old world, their teams will too.

The three failures that quietly kill AI programs

I've seen three patterns sink otherwise promising AI initiatives.

Recruiting fakers. People who talk confidently about AI usage but can't solve problems when tested. They understand concepts but don't apply them. Once embedded in teams, they set the wrong example and they're hard to remove. The fix: test candidates on real problem-solving with AI tools, not just their ability to discuss the technology.

The 80% problem. AI makes it easy to go from zero to 80% on a project. That initial burst gets people excited. But they abandon the 80% to 100% phase, which requires iteration with users and customers. That's where projects die, and it's often where the real value lives. The last 20% of development might deliver 80% of the value.

Workslop. People adopt AI for document generation and produce long, low-information-density documents they don't even read themselves. This drowns organizations in predicted tokens rather than actual insights. It needs to be called out explicitly at every level: generating unedited, low-value content is unacceptable, regardless of seniority. Leaders should measure process, not just output. The bar isn't "did AI produce something." The bar is "did a human use AI to produce something better than they could have alone."

Measure process, not just output

The biggest mistake organizations make with AI programs is measuring the wrong thing. Most track adoption metrics: how many people logged in, how many prompts were sent, how many documents were generated. None of this tells you whether AI is making people better at their jobs.

What matters is whether people can decompose problems effectively, select the right tool for each sub-task, and apply judgment to the output. One approach: run a standardized assessment before and after training that tests resourcefulness and problem-solving with AI, not just tool knowledge. This produces a quantifiable baseline, measures whether the program actually improved capability, and makes skill gaps visible early.
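As a rough illustration of the before-and-after comparison, the sketch below computes each person's score change and flags anyone who did not improve. The names, the scores, and the 0-100 scale are hypothetical; how the assessment itself is scored is left open here.

```python
from statistics import mean

# Hypothetical assessment scores (0-100) before and after training.
before = {"ana": 40, "ben": 55, "chen": 70}
after = {"ana": 65, "ben": 55, "chen": 85}


def score_changes(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Per-person change in score, for people assessed both times."""
    return {name: after[name] - before[name] for name in before if name in after}


changes = score_changes(before, after)
average_gain = mean(list(changes.values()))
# People whose score did not go up: the skill gaps the program should surface.
no_improvement = sorted(name for name, delta in changes.items() if delta <= 0)

print(f"average gain: {average_gain:.1f} points")
print(f"no measurable improvement: {no_improvement}")
```

Looking at per-person changes rather than only the average matters because an average can hide a split outcome, where strong operators improve and everyone else stays flat.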

If you only measure output, you incentivize people to use AI as a shortcut to produce mediocre work faster. Measuring the process rewards the right behaviors and makes it clear that "I used ChatGPT and it gave me this" is not what you're looking for.

A framework for effective AI education

Effective AI education should cover four areas:

- Problem decomposition. How to break any job into sub-tasks and decide which ones benefit from AI.

- Tool-to-workflow mapping. Connecting specific AI capabilities to the actual work each team does, not generic prompt training.

- Output judgment. Recognizing when AI output needs human verification and when a polished-looking response is masking inaccuracy.

- Quality standards. Internalizing a simple truth: a response that reads well isn't the same as a response that's correct. Workslop is never acceptable.

The multiplier thesis holds: AI amplifies existing capability, so education has to start with problem-solving methodology, not tool demos. Train around workflows using something like the $5/$50/$500 framework. Make it mandatory. Put leaders through it first. And measure whether people are getting better at their jobs, not whether they logged in. The question isn't whether organizations will use AI. It's whether they'll build the judgment to use it well.
